by Curtis Brown

Introduction

Back in July 2014, my illustrious colleague, Steve Dunne, wrote about his experiences with Nvidia-based GPU-accelerated graphics during a Proof of Concept in a VMware Horizon View environment (see Horizon View 3d a client engagement). But as with all things in the wonderful world of technology, things move on.

In the case of 3D acceleration, Nvidia have made two significant advances. The first is GRID, and the second is the new Tesla M60/M6 GPU adapters. This blog post looks at these and my own experiences implementing them in a proof of concept.

What is GRID?

Originally, it referred to the combination of a software management layer and the GRID K1 or GRID K2 GPU boards. These were released with support on vSphere 5.1 and 5.5 allowing VDI desktops to be provided with graphics acceleration. Initially, this operated in two forms, vDGA (where a GPU is dedicated to a single VM) or vSGA (where the VMware driver offloads to the Nvidia adapter).

These both had limitations. vDGA is fast as a thief, but the VM is hard-pinned to a host and it’s a 1:1 mapping of VM to physical GPU, which limits scaling considerably. vSGA was great for scaling, but didn’t provide great performance – it suits lightweight use cases wanting some graphics, such as a better Aero interface or browser rendering.

The GRID software layer adds a third option that provides more flexibility. The GRID software is installed (as a VIB on the ESXi host) and presents the capability of adding a vGPU to a VM. When applied to a VM, the administrator can select a Profile. These profiles are largely based around how much video RAM is assigned to the VM. With GRID 2.0, however, the profile also determines the feature set.

For example, in 2.0, there are Quadro validated profiles specifically optimized for CAD, and Business profiles for more general purpose use. This is akin to buying a Quadro adapter or a GeForce adapter for a physical machine.

In GRID 2.0, when paired with the M60 adapter the profiling is both flexible and powerful. It provides the ability to share resources between VMs, while still offering the capability of high end performance – essentially vDGA – but without the complex configuration of pass-through. Unlike vDGA, a GRID VM can be cold migrated to a second host relatively easily.

New Hardware - M6 & M60

Nvidia recently released two new adapters based on their Tesla GPU core. The M6 is an MXM format mezzanine card designed for blade servers, while the M60 is a full PCIe card for traditional servers. The M6 has a single GPU, while the M60 has two larger GPUs.

So, how do they compare?

| | GRID K1 | GRID K2 | Tesla M6 | Tesla M60 |
| --- | --- | --- | --- | --- |
| GPUs (CUDA cores) | 4 (4x192) | 2 (2x1536) | 1 (1536) | 2 (2x2048) |
| VRAM | 4x4GB | 2x4GB | 1x8GB | 2x8GB |
| vDGA users | 4 | 2 | 1 | 2 |
| GRID version | 1.0 | 1.0 | 2.0 | 2.0 |
| GRID 1GB Profile | 16 | 8 | 8 | 16 |
| GRID 2GB Profile | 8 | 4 | 4 | 8 |
| GRID 4GB Profile | 4 | 2 | 2 | 4 |
| GRID 8GB Profile | - | - | 1 | 2 |
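
The per-profile user counts in the comparison follow directly from the VRAM figures: each vGPU sits on a single physical GPU, so the count is the number of profiles that fit on one GPU, multiplied by the number of GPUs on the board. A minimal Python sketch of that arithmetic is below. The `Board` class and `vgpus_per_board` function are illustrative names of my own, not part of any Nvidia or VMware tool, and the model looks only at VRAM.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Board:
    """Hypothetical description of a GPU board, using the figures from the table."""
    name: str
    gpus: int          # physical GPUs on the board
    vram_per_gpu: int  # video RAM per GPU, in GB


def vgpus_per_board(board: Board, profile_gb: int) -> int:
    """Number of VMs a board can host at a given profile size (VRAM only).

    The count is worked out per GPU first, because a vGPU cannot span GPUs,
    and then multiplied by the number of GPUs on the board.
    """
    return board.gpus * (board.vram_per_gpu // profile_gb)


BOARDS = [
    Board("GRID K1", gpus=4, vram_per_gpu=4),
    Board("GRID K2", gpus=2, vram_per_gpu=4),
    Board("Tesla M6", gpus=1, vram_per_gpu=8),
    Board("Tesla M60", gpus=2, vram_per_gpu=8),
]

if __name__ == "__main__":
    for board in BOARDS:
        counts = [vgpus_per_board(board, size) for size in (1, 2, 4, 8)]
        print(board.name, counts)
```

A result of 0 corresponds to a "-" in the table: the K1 and K2 have only 4GB per GPU, so an 8GB profile cannot fit.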

Note that the 8GB Quadro profile on GRID 2.0 also exposes CUDA and OpenCL allowing the card to be used for compute acceleration too – this might be useful outside of VDI provisioning…

Oh, and GRID 2.0 adds 4K display support too – up to four monitors, depending on the profile.

Onto the PoC…

I was fortunate enough recently to be involved with a Proof of Concept using the Nvidia Tesla M60 on Cisco UCS C240 M4 hardware. This isn’t officially supported until Q1 2016, but working with Cisco and Nvidia, we stood up what will essentially be the certified configuration (complete with C240 specific cabling, heatsink and air flow baffles and beta release firmware) – quite literally bleeding edge!

All of this was going to host VMware vSphere 6.0 Update 1 and VMware Horizon View 6.2.

The intention of the PoC was to test a couple of CAD applications for suitability. The test was to include presentation on Horizon VDI desktops, as well as leveraging the new support for 3D accelerated RDSH based applications and desktops.

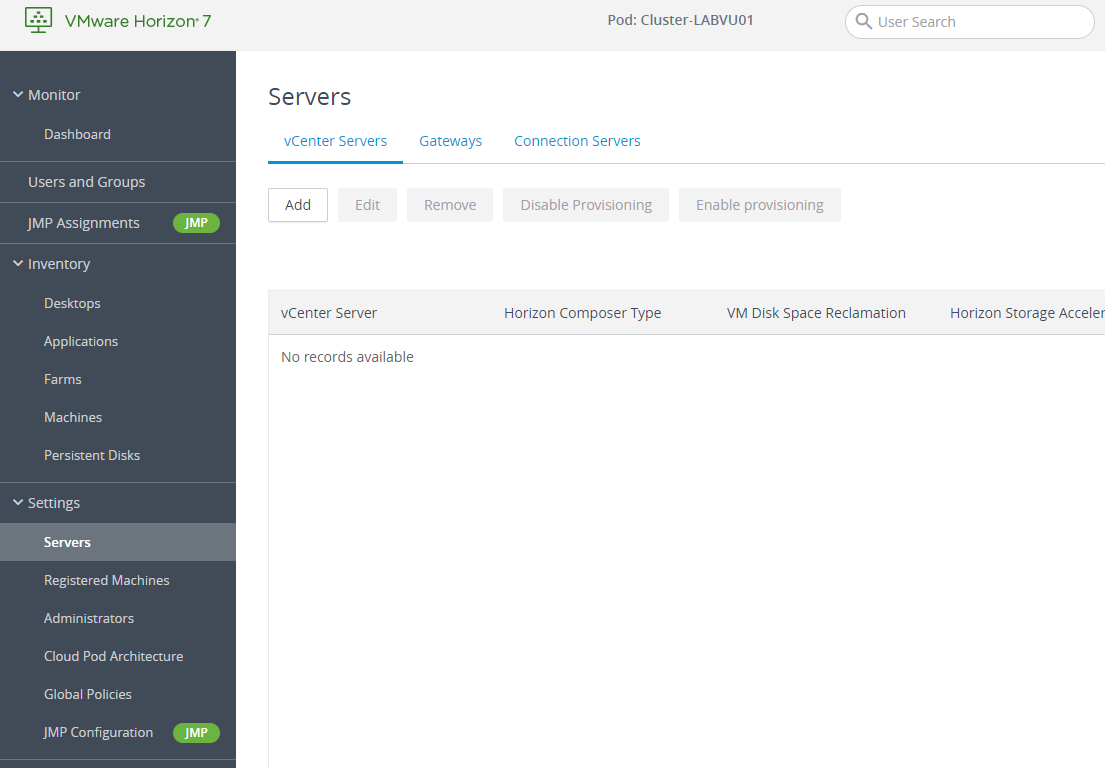

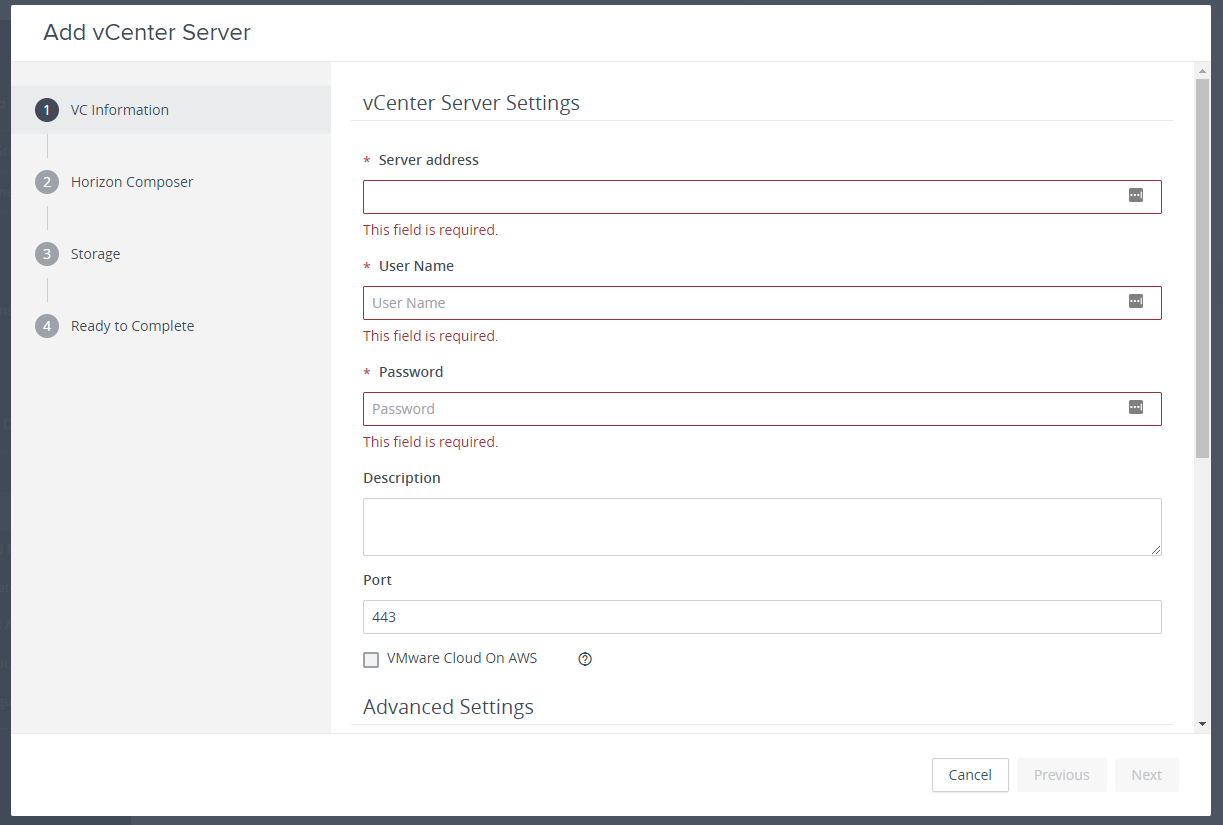

Installing the infrastructure

Installing the infrastructure side of the solution was pretty straightforward: a standard build out of vSphere and a small Horizon View environment. Our M60 equipped host was placed in its own cluster.

Laying out the GRID

The next step was acquiring and installing the software components for GRID. Normally, Nvidia software would be acquired through the regular Nvidia.com downloads site, but GRID 2.0 is different: you need to go through a registration process first. Once you’ve done this, you need to download three items:

- The Nvidia GPU Mode Switch tool

- The Nvidia GRID 2.0 software package

- The Nvidia GRID 2.0 License server

GPU Mode Switch

The Tesla cards can operate in one of two native modes – Compute and Graphics. The default is the former, but for vSphere, we need the latter. To switch modes, we use the GPU Mode Switch tool. The GPU mode switch tool for a VMware environment is provided on an ISO image that boots into a Linux shell. We boot the host from the ISO image and run the following:

- To check the current mode, run

gpumodeswitch --listgpumodes

- If the mode needs to be changed to graphics, run

gpumodeswitch --gpumode graphics

Then reboot the server into ESXi. You’re then good to go on installing the VIB containing the drivers and management software for GRID on ESXi.

Installing the VIB

This is relatively easy. Nvidia provide the VIB package as one of the downloads. You then need to upload this to the ESXi host. I find the easiest method is to upload the VIB to a vSphere datastore using the vSphere client.

From there, you need to get to the ESXi command line, either over SSH or via the server console. SSH will need enabling on the host first, and, as we’re all good, security conscious boys and girls, disabling when we’re done. Oh, and put the host into maintenance mode!

We use esxcli to install the VIB –

esxcli software vib install -v //.vib

By default, vSphere balances vGPU resources across GPUs. However, if you mix VMs with different GRID profiles, it may prevent a VM from starting. For example, a pair of VMs are running with a 1GB profile on a host with a (2x GPU) M60 card. Each GPU (with 8GB VRAM) ends up with a single VM. VM no. 3 has the 8GB profile but can’t start as the GPUs each only have 7GB available. To change allocation to one where each vGPU resource is allocated by depth instead, there’s a hidden ESXi host setting to edit:

/etc/vmware/config

Add the following:

vGPU.consolidation=true

Then we reboot the host and take it out of maintenance mode.
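
To make the difference between the two allocation modes concrete, here is a small Python model of the example above: two 1GB VMs followed by an 8GB VM on a two-GPU M60. It is only a sketch under simplifying assumptions. The `place` function is a hypothetical helper of my own, it looks at free VRAM and nothing else, and the real vSphere and GRID placement logic may apply further rules.

```python
def place(vms, gpu_count=2, vram_gb=8, consolidate=False):
    """Place vGPU profiles (sizes in GB) onto GPUs in the order given.

    consolidate=False: breadth-first, pick the GPU with the most free VRAM.
    consolidate=True:  depth-first, pick the fullest GPU that still fits.
    Returns one GPU index per VM, or None for a VM that cannot start.
    """
    free = [vram_gb] * gpu_count
    placement = []
    for size in vms:
        # GPUs that still have room for this profile
        candidates = [i for i in range(gpu_count) if free[i] >= size]
        if not candidates:
            placement.append(None)  # no single GPU has enough VRAM left
            continue
        if consolidate:
            chosen = min(candidates, key=lambda i: free[i])
        else:
            chosen = max(candidates, key=lambda i: free[i])
        free[chosen] -= size
        placement.append(chosen)
    return placement


if __name__ == "__main__":
    print(place([1, 1, 8]))                    # [0, 1, None]
    print(place([1, 1, 8], consolidate=True))  # [0, 0, 1]
```

With breadth-first placement each GPU ends up holding one 1GB VM, leaving 7GB free on both, so the 8GB VM has nowhere to go. With depth-first placement both 1GB VMs land on the first GPU and the second GPU stays empty for the 8GB profile.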

Prep the Nvidia License Server

This is a GRID 2.0 element that is of little technical benefit to the solution, but nonetheless is a requirement of Nvidia. In their wisdom, they sell GRID 2.0 in differing levels of licensing, based on feature set (unlike 1.0 that simply worked on the principle of you got everything with the board).

Regardless, it requires a relatively humble VM running either Windows (7/2008 or later) with a copy of Java, or Linux. Installation on Windows is a classic setup executable wizard. One thing to note is that the wizard asks about allowing access through the Windows firewall. The application uses port 7070 for the license function, so allow this one through. The other is port 8080 for management. Don’t open this one – the management page has no security, so you only want to access it from the local OS itself.
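
Once the firewall rules are in place, it is worth confirming from another machine that the ports behave as intended. The Python sketch below is one way to do that using only the standard library. The function names are my own, and the port numbers are simply the ones mentioned above. Run from a desktop VM, you would hope to see the licensing port reachable and the management port not.

```python
import socket


def port_is_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and name resolution failures
        return False


def check_license_server(host: str) -> dict:
    """Report reachability of the licensing (7070) and management (8080) ports."""
    return {
        "licensing_7070": port_is_open(host, 7070),
        "management_8080": port_is_open(host, 8080),
    }
```

Calling `check_license_server` with the license server's hostname from a remote machine returns a small dictionary of results. A `True` for `management_8080` would suggest the firewall is more open than it should be.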

Once it’s installed, you need to register the license server on the Nvidia portal – you need the MAC address of the license server for this. Once it is registered and the licenses are applied, you download a BIN file. This must then be registered on the license server within 24 hours of generation.

Configuring the VM for View and 3D acceleration

Again, this is pretty straightforward for most desktop OSes, but a little trickier for RDSH hosts. For a regular desktop OS, such as Windows 7, build a VM as before – install the OS, VMware Tools and the View agent, and then optimise.

One extra thing you’ll need at this point - install either the VMware Horizon View Direct Connect agent or VNC Server within the VM. You’ll need this because later you’ll need to configure the Nvidia Control Panel – but once the Nvidia drivers are installed, you can’t use the console via the vSphere client and RDP won’t work as it uses its own WDM device driver for video. You’ve been warned!

At this point, the cool stuff starts.

First, we shut down the VM. We edit the settings of the VM using the vSphere Web Client (yes – I said the Web client – the old client won’t help here) and add a Shared PCI device. This will show up as NVIDIA GRID and allow you to select a profile as required.

OK this and power up the VM.

At this point, if you log on to the PC and check Windows Device Manager, you’ll see an extra graphics board – probably using the Windows generic adaptor.

We install the GRID specific Nvidia drivers (again, from the GRID portal). This is pretty much the same process as with a traditional desktop with an Nvidia board. Now we reboot the VM.

As stated above, you can’t use the vSphere console – so log on via VNC or Horizon View Direct connect. At this point, you need to register the VM with the license server. This is done via the Licensing tab on the Nvidia Control Panel.

We can now use this VM in Horizon View, including as a template for linked clones if we wish. One thing to note is that PCoIP is mandatory when setting up your pool! RDP just won’t cut it as it’ll use its own drivers instead of Nvidia’s.

There are a couple of catches when deploying RDSH Servers. The easy one is that when you install the View Agent on your RDSH server, make sure you select the 3D RDSH option. The next one is only really an issue on Windows 2012. At the last stage, licensing the server for Nvidia, Horizon View Direct Connect considers you as logged in via Remote Desktop, even if the protocol is PCoIP. As such, the Nvidia Control Panel will not function. Use a VNC Server instead.

Closing thoughts

As users demand more and more graphics horsepower from VDI, the proliferation of GPU solutions is likely to increase, hopefully bringing more players into the market and impacting the currently rather high costs. AMD are already starting to offer support for vSGA and vDGA on their FirePro cards – hopefully, they’ll offer an equivalent to GRID in the future.

With respect to GRID 2.0, my opinion is somewhat positive. It’s powerful and relatively straightforward to set up, though the need to deploy the licensing solution adds an otherwise unnecessary layer of complexity and a potential point of failure.

Suffice to say though, if Santa stuffed a Tesla M60 into my no doubt copious pile of Christmas presents, I would be far from unhappy.

About the Author

Curtis Brown joined the Xtravirt consulting team in October 2012. With over 16 years’ experience, Curtis has worked in both private and public sectors on a wide variety of projects including many solutions for server consolidation and VDI, implementation and migration, data centre transformations and virtualisation programmes.

In 2015, Curtis achieved the VMware vExpert award.

Twitter: @curtisbrown01

If you’d like to learn more about VMware Horizon View, 3D acceleration or VDI, please contact us and we’d be more than happy to use our real world deployment experiences to help you.